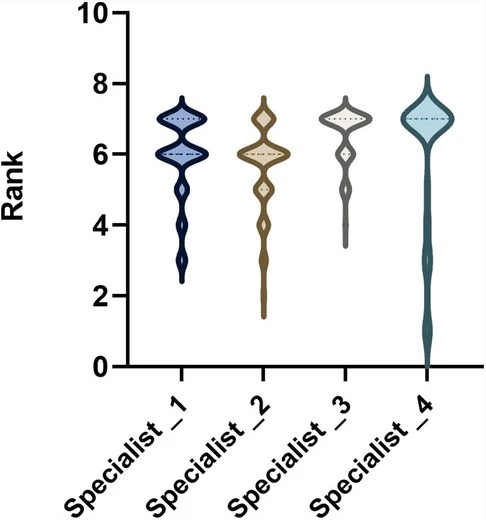

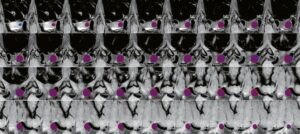

In our recent study published in European Radiology, we evaluated the reliability of ChatGPT, an AI system developed by OpenAI, as a referral tool for imaging tests. We compared its recommendations with those of ESR iGuide, a clinical decision support system (CDSS) developed by the European Society of Radiology in cooperation with the American College of Radiology. Four experts served as our ground truth evaluators.

As AI systems become increasingly prevalent in healthcare, it is important to rigorously assess their capabilities and limitations.

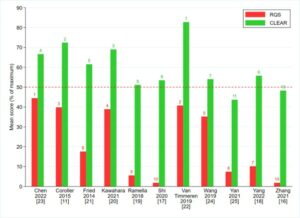

Our results demonstrate that while ChatGPT performed reasonably well on standard referral scenarios (see featured image), it struggled with more complex cases involving subtle clinical findings or ambiguous presentations. This reinforces the point that current AI technology should be considered an aid to clinical judgment rather than a replacement for it. As with any diagnostic tool, understanding its strengths and weaknesses is key to appropriate use.

A major teaching point from our study is the importance of comprehensive evaluation before integrating emerging technologies into practice. ChatGPT and similar generative models hold promise, but more progress is needed, especially in handling ambiguity and atypical cases. Ongoing assessment against validated clinical standards, as we performed with the CDSS, will be important to guide development and ensure patient safety. AI shows great potential to augment clinicians, but closer scrutiny is warranted to define appropriate uses that maximize benefits while avoiding unintended consequences. Overall, our findings serve as a valuable data point on both ChatGPT’s current abilities and best practices for AI evaluation going forward.

Key points

- ChatGPT recommendations were highly consistent with the recommendations provided by the ESR iGuide.

- ChatGPT’s ability to guide the selection of appropriate imaging tests may be comparable, to some degree, to that of ESR iGuide.

Authors: Shani Rosen & Mor Saban