With the increasing use of artificial intelligence (AI) in every segment of life, including healthcare, patient data is being collected on a massive scale and shared among different stakeholders. But who has control over it? The hospital, the patient, the equipment provider, the software developer, the state authorities? And if no one knows who controls the data, what can happen? At the ESR AI meeting in Barcelona last April, Peter van Ooijen, a computer scientist from Groningen, the Netherlands, delved into these new and challenging questions, which will shape radiology practice.

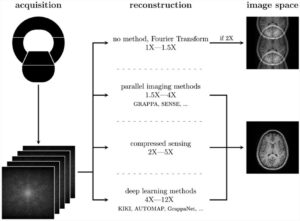

In machine and deep learning, the rules of the algorithm are no longer programmed into the system by a human; instead, the machine uses input data and known outcomes as training data to develop the algorithm. Data quality is therefore paramount, since the performance of the resulting algorithm depends directly on the quality of the data collected.

Developing an algorithm is a complex process, which also makes algorithms vulnerable, van Ooijen told a packed audience. “In January 2019, Yisroel Mirsky’s team proved that AI was susceptible to being attacked by malicious hackers and, by introducing or modifying the current database of the algorithm, a well-trained algorithm could be ruined and become unusable, with great danger to the patient’s health,” he said.

The machine can’t distinguish between good and bad data. Input data should therefore always be of high quality to avoid degrading the algorithm.
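To make the data-quality point concrete, here is a minimal Python sketch. It is not taken from the talk or from Mirsky's study: a toy classifier "learns" a decision threshold from hypothetical labelled cases, and relabelling some malignant cases as benign (a simple label-flipping form of data poisoning, assumed here purely for illustration) shifts what it learns. The data, the nearest-centroid rule, and the function names `fit_threshold`, `accuracy`, and `poison` are all illustrative assumptions.

```python
from statistics import mean

# Hypothetical toy data (an assumption for illustration): one numeric feature
# per case, e.g. a lesion score, plus a known outcome (0 = benign, 1 = malignant).
TRAIN = [(x, 0) for x in range(1, 6)] + [(x, 1) for x in range(6, 11)]
TEST = [(x, 0 if x <= 5 else 1) for x in range(1, 11)]


def fit_threshold(samples):
    """'Learn' a decision rule from labelled examples: the midpoint between
    the mean feature value of each class (a nearest-centroid rule)."""
    benign = [x for x, y in samples if y == 0]
    malignant = [x for x, y in samples if y == 1]
    return (mean(benign) + mean(malignant)) / 2


def accuracy(threshold, samples):
    """Fraction of samples whose label matches the rule 'x > threshold'."""
    correct = sum((x > threshold) == bool(y) for x, y in samples)
    return correct / len(samples)


def poison(samples, targets):
    """Simulate tampering: set the label of every case listed in targets to benign."""
    return [(x, 0 if x in targets else y) for x, y in samples]


clean_rule = fit_threshold(TRAIN)
poisoned_rule = fit_threshold(poison(TRAIN, {6, 7, 8, 9}))

print(f"clean threshold={clean_rule}, accuracy={accuracy(clean_rule, TEST)}")
print(f"poisoned threshold={poisoned_rule}, accuracy={accuracy(poisoned_rule, TEST)}")
```

Run as is, the clean rule classifies every test case correctly (threshold 5.5), while the poisoned rule learns a higher threshold (7.5) and misses the two lowest-scoring malignant cases. Nothing in the training step flags the bad labels; the model simply learns from them.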

Don’t blindly rely on AI

“If you start to rely too much on those systems, you’re not in control anymore,” van Ooijen continued.

AI-based tools cannot deal with the unexpected, because they have not been trained for every situation. Hundreds of examples can be found online of AI-driven machines ending up in undesirable situations simply because they failed to adapt their trained reactions to a changing, real-world environment. The Japanese driver who followed his GPS and drove his car into the bay illustrates what happens when one blindly follows a machine (2).

It remains doubtful whether AI is even designed to improvise in unexpected situations, he added. “Will they react in the direction we think is normal and morally acceptable by society? Or will AI react in an alternative direction, which we didn’t expect?”

Elon Musk, Bill Gates, and Steve Wozniak have all warned that superintelligence could be a serious danger to humanity, possibly more dangerous than nuclear weapons (1). “If we are not linking AI to the core values of our society, sooner or later it will cause damage. Worst case, it could control what we know, what we think, and how we act.”

Be in control when using AI: an illusion?

In healthcare, data is collected from patients who agree to share it for research purposes, so that algorithms can be trained on relevant, high-quality data. While databases must be expanded to build large neural networks for research, citizens must also be given rights over their data.

Citizens in the European Union (EU) have a series of rights covered by the General Data Protection Regulation (GDPR); for instance, the rights to access, rectify, move (port), and erase their data, and to object to its processing. They can also restrict how their data is processed and object to automated decision-making and profiling.

Despite existing regulations designed to protect their data, people continue to expose and spread it across channels that do not guarantee their rights will be respected. “We still make backup copies, send emails and files, copy CDs, etc. So we lose control of the data, and many of the rights, such as the right to be forgotten and erased from the system, are violated,” van Ooijen said.

AI adds a further complication: once the data is fed into the system, no one knows what happens to it. “It is a black box. We don’t know what is happening with the data anymore, how the algorithm works, and what AI is doing with the data.”

What about property rights once AI has transformed the data? The EU has acknowledged the importance that AI-driven machines and robots will have in the future: in 2017, the European Parliament adopted a resolution calling for Civil Law Rules on Robotics. Intellectual property rights could follow, given that the resolution recognizes the need for a specific legal status for robots. In Saudi Arabia, a robot called Sophia was recently granted citizenship, entitling it to a series of rights (3).

Data volumes are growing globally, which will make managing them increasingly challenging. According to an EMC publication, there were 4.4 zettabytes (1 zettabyte = 1 trillion GB) of digital data worldwide in 2013; by 2020, this figure was projected to climb tenfold, to 44 zettabytes.

“This is not meant to scare the audience away from AI, but we must be aware of the risks associated with a technology that has enormous potential to save lives and to make radiology procedures more accurate and patient-oriented,” van Ooijen concluded.

1. http://alturl.com/zxcxm

2. https://gizmodo.com/tourists-follow-car-gps-into-a-body-of-water-5893882

3. https://www.dw.com/en/saudi-arabia-grants-citizenship-to-robot-sophia/a-41150856

Peter van Ooijen, MSc PhD, is an associate professor in medical imaging informatics at the department of radiation oncology at the University Medical Center Groningen, where he is also the coordinator of the Machine Learning Lab, which is part of the Data Science in Health (DASH) unit. He is a board member of EuSoMII, has been involved in medical imaging informatics research for over 20 years, and has co-published over 150 peer-reviewed publications, 125 of which are listed in PubMed. He is a well-received invited lecturer at many conferences and courses worldwide and an editorial board member of PLOS ONE and European Radiology Experimental. He holds the University Teaching Qualification in the Netherlands and is a CPHIMS.
